Workbench with Slurm and Apptainer (formerly Singularity)

Introduction & Motivation

Workbench supports launching sessions into external cluster resource managers, including Slurm on High Performance Computing (HPC) clusters.

Outside of HPC environments, Docker containers are a common tool for reproducing software environments, and they are often orchestrated with Kubernetes. On an HPC cluster, however, Docker is problematic. By default the Docker daemon runs as root, so building, starting, or stopping containers requires root-equivalent privileges. Granting those privileges to end users could put the stability of the HPC cluster at risk.

To mitigate that risk, Singularity (or an alternative such as Shifter) brings many of the reproducibility benefits of containers while running them in user space, as the invoking user rather than through a root-owned daemon.

Singularity joined the Linux Foundation in 2021 and was renamed Apptainer. Because the name Singularity is more familiar, the rest of this document refers to Singularity rather than Apptainer.

In short: what Docker is to Kubernetes, Singularity is to HPC.

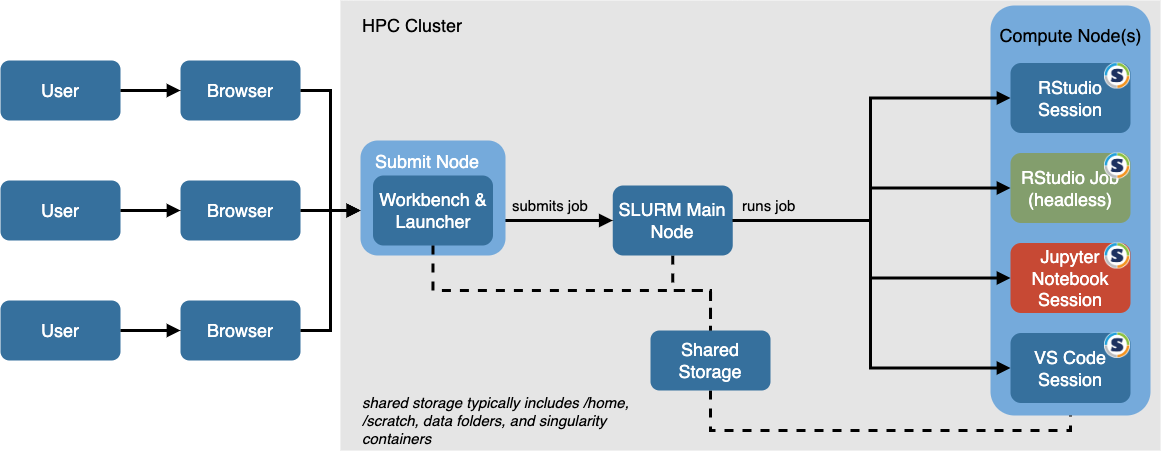

General idea

The outline below suggests an approach for using Singularity with the Workbench Launcher and Slurm. The approach can be extended to environments that combine Docker and Kubernetes with Singularity, Slurm, and HPC. Detailed instructions are in the following GitHub repository: https://github.com/sol-eng/singularity-rstudio.

- Use Slurm's SPANK (Slurm Plug-in Architecture for Node and job (K)control) plugin architecture to integrate Singularity via a custom plugin.

- For convenience, start from the existing Workbench and r-session-complete Docker images and add HPC/Slurm-specific customizations to create a Singularity container.

- Modify the Workbench Slurm Launcher configuration to add a custom field that holds the name of the Singularity container.

- Run Workbench on a Slurm submit node, preferably in a Docker container maintained by the system administrators.
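To illustrate the command-wrapping idea behind the SPANK bullet, here is a minimal Python sketch. It is not a SPANK plugin (those are compiled shared objects that Slurm loads) and it does not reproduce the plugin from the repository. It assumes only that the container name arrives through the custom Launcher field and that the job command is then prefixed with `singularity exec`. The image directory `/opt/singularity/images`, the `.sif` naming convention, and the function name `wrap_in_singularity` are hypothetical.

```python
import shlex
from pathlib import PurePosixPath

# Hypothetical directory where administrators keep approved .sif images.
IMAGE_DIR = PurePosixPath("/opt/singularity/images")


def wrap_in_singularity(command, container, binds=()):
    """Return argv that runs `command` inside `container` via `singularity exec`.

    `container` stands in for the value of the custom Launcher field
    (hypothetical); `binds` are host paths mounted with --bind.
    """
    if not container:
        # No container requested: run the job command unchanged.
        return list(command)
    # Keep only the final path component so users cannot point at
    # arbitrary locations outside IMAGE_DIR (e.g. "../../tmp/x.sif").
    name = PurePosixPath(container).name
    if not name.endswith(".sif"):
        name += ".sif"
    argv = ["singularity", "exec"]
    for path in binds:
        argv += ["--bind", path]
    argv.append(str(IMAGE_DIR / name))
    argv += list(command)
    return argv


if __name__ == "__main__":
    argv = wrap_in_singularity(["R", "--version"], "r-session-complete", binds=["/home"])
    print(shlex.join(argv))
```

Restricting the requested name to a single file inside an administrator-controlled directory is one way to keep users on approved images; a real plugin may enforce this differently.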

It is also possible to build r-session-complete containers from scratch. Either way, the Slurm-specific modifications must be added (e.g., line 79 in the GitHub repo).

A reference architecture is shown below.

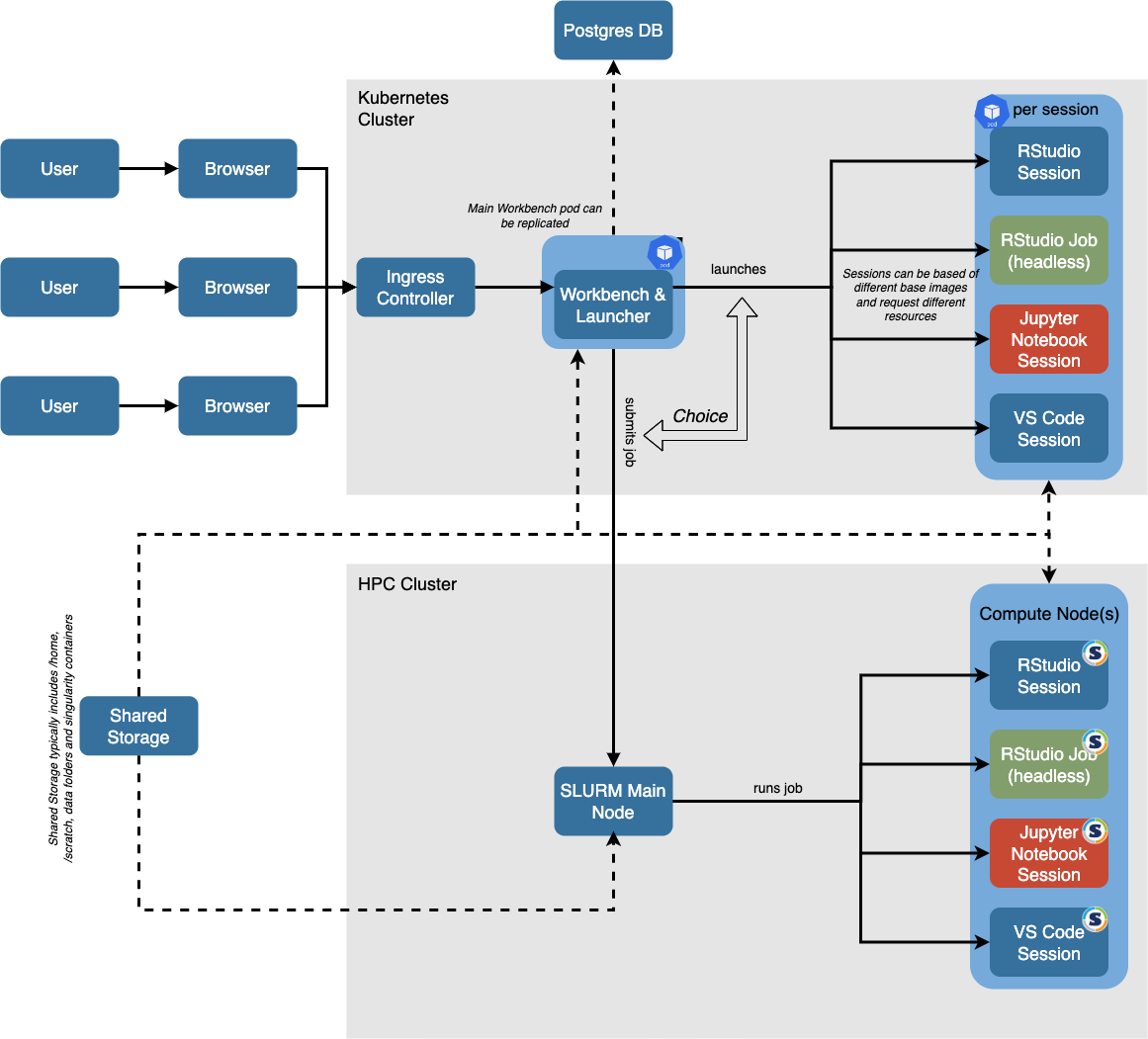

Unifying Kubernetes and HPC: the best of both worlds

Many customers run both a Kubernetes platform and an HPC cluster. Using Singularity on the HPC cluster is often the best way to make use of both, because the same R session image can serve both platforms.

On Kubernetes, Docker images are needed for both Workbench and the R session. Once the Slurm customizations have been added, the R session Docker image can be used directly by Singularity or converted into a Singularity image file (SIF).
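The conversion step can be scripted. The sketch below assumes the `singularity build <file>.sif docker://<reference>` form of the CLI, which pulls a Docker image and writes a SIF file. The helper name `sif_build_command`, the `<name>_<tag>.sif` naming scheme, and the example tag are hypothetical. The function only builds the argument list, so the command can be reviewed before it is executed.

```python
from pathlib import PurePosixPath


def sif_build_command(image_ref, out_dir="."):
    """Return argv for `singularity build` converting a Docker image to SIF."""
    # Only the last path component can carry the tag; a registry host may
    # contain ":port", so split on ":" after the final "/".
    _, _, last = image_ref.rpartition("/")
    name, _, tag = last.partition(":")
    tag = tag or "latest"  # Docker's default tag when none is given
    sif = PurePosixPath(out_dir) / f"{name}_{tag}.sif"
    return ["singularity", "build", str(sif), f"docker://{image_ref}"]


if __name__ == "__main__":
    # The tag is illustrative; use the r-session-complete tag that matches
    # your Workbench version.
    argv = sif_build_command("rstudio/r-session-complete:jammy", "/opt/singularity/images")
    print(" ".join(argv))
    # To run the build: subprocess.run(argv, check=True)
```

Digest references such as `image@sha256:...` are not handled by this sketch.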

A reference architecture that includes both HPC with Singularity and Kubernetes is shown below.